Recent advances in AI have yielded improvements in its use for the detection of glaucomatous progression. Specifically, new algorithms show potential for detecting ongoing worsening and forecasting future visual field deterioration. Additionally, models show promise in using OCT imaging to identify functional (visual field) worsening without the need for onerous visual field testing. This article explores the potential uses of AI in glaucoma care and the steps toward implementing these models in clinical practice.

FORECASTING VISUAL FIELD WORSENING

A 2014 study by Chauhan et al¹ showed that approximately 5% to 10% of patients under routine glaucoma care have rapidly progressing disease, according to their rate of visual field change. In these cases, mean deviation worsened by more than 1 dB per year (ie, the rate of change was more negative than -1 dB per year). What if there were a way to use early data to identify eyes at such risk of damage without having to wait several years for the trends to become apparent on visual field testing? This could enable earlier intervention, help to prevent vision loss from glaucoma, and confirm the need for closer monitoring of some patients (eg, every 2 to 3 months vs every 6 to 12 months).
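To make the rate criterion concrete, the following is a minimal sketch of how a mean deviation slope could be estimated from a series of visual field tests and compared with the -1 dB per year cutoff. It assumes an ordinary least-squares fit, which is one common choice and not necessarily the method used in the cited study. The function names and the sample values are hypothetical.

```python
from typing import Sequence


def md_slope_db_per_year(years: Sequence[float], md_db: Sequence[float]) -> float:
    """Ordinary least-squares slope of mean deviation (dB) against time (years)."""
    if len(years) != len(md_db) or len(years) < 2:
        raise ValueError("need at least two paired (time, MD) observations")
    n = len(years)
    mean_t = sum(years) / n
    mean_md = sum(md_db) / n
    sxx = sum((t - mean_t) ** 2 for t in years)
    if sxx == 0:
        raise ValueError("all tests share the same time point")
    sxy = sum((t - mean_t) * (m - mean_md) for t, m in zip(years, md_db))
    return sxy / sxx


def is_rapid_progressor(slope_db_per_year: float, cutoff: float = -1.0) -> bool:
    """True when the slope is more negative than the cutoff (default -1 dB/year)."""
    return slope_db_per_year < cutoff


# Hypothetical eye: five tests over 2 years (illustrative values only).
years = [0.0, 0.5, 1.0, 1.5, 2.0]
md_db = [-2.0, -2.8, -3.4, -4.3, -5.0]
slope = md_slope_db_per_year(years, md_db)
print(f"slope = {slope:.2f} dB/year, rapid = {is_rapid_progressor(slope)}")
```

The drawback the paragraph describes is visible here: a stable slope estimate needs several tests spread over years, which is the delay a forecasting model aims to avoid.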

My team and I created an AI model to forecast rapidly worsening glaucoma using early data. The model takes in a patient’s early visual field tests (from one to three tests) and their first OCT scan, combines them with the patient’s first set of clinical information (IOP, visual acuity, and demographics), and forecasts their risk of future rapid glaucomatous progression. In a data set of more than 4,000 eyes with longitudinal visual field data, we found that, when the model took in the patient’s first visual field test, OCT scan, and clinical/demographic information, it achieved an area under the curve (AUC) of nearly 0.8. (AUC is a summary measure of sensitivity and specificity across all decision thresholds.) When we also included the second or the second and third visual field tests, the AUC increased to greater than 0.8, which suggests that, after further validation, such models may perform well enough to be deployed in a clinical setting.
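For readers less familiar with AUC, the sketch below computes it directly from its pairwise interpretation: the probability that a randomly chosen worsening eye receives a higher risk score than a randomly chosen stable eye, with ties counted as one half. This equals the area under the receiver operating characteristic curve. The function name, labels, and scores are hypothetical and are not taken from the study data.

```python
from typing import Sequence


def auc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """Area under the ROC curve via pairwise comparison of positives and negatives."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("need at least one positive and one negative example")
    wins = 0.0
    for p in positives:
        for q in negatives:
            if p > q:
                wins += 1.0   # positive correctly ranked above negative
            elif p == q:
                wins += 0.5   # ties count as half
    return wins / (len(positives) * len(negatives))


# Hypothetical example: 1 = rapid progressor, 0 = not; scores are model risk outputs.
labels = [1, 1, 0, 0, 1, 0]
scores = [0.9, 0.7, 0.6, 0.2, 0.4, 0.5]
print(round(auc(labels, scores), 3))
```

An AUC of 0.5 corresponds to chance ranking and 1.0 to perfect separation, which is why values near or above 0.8 are read as encouraging.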

DETECTING VISUAL FIELD WORSENING

My colleagues and I also explored whether we could use AI to detect worsening on visual field tests that were being acquired over time during normal patient care. One challenge with visual field testing is that there are many definitions of worsening. We have various trend-based methods to detect worsening, such as following mean deviation or the visual field index over time. We also have various event-based methods to detect visual field worsening, such as the Glaucoma Progression Analysis software. Figure 1 shows an UpSet plot, which compares these methods by displaying how many eyes each combination of methods labels as worsening on visual field testing. We found that, in a set of 8,000 eyes with seven visual field tests, there was little agreement between methods.

Figure 1. A comparison of methods to detect visual field worsening shows little agreement.

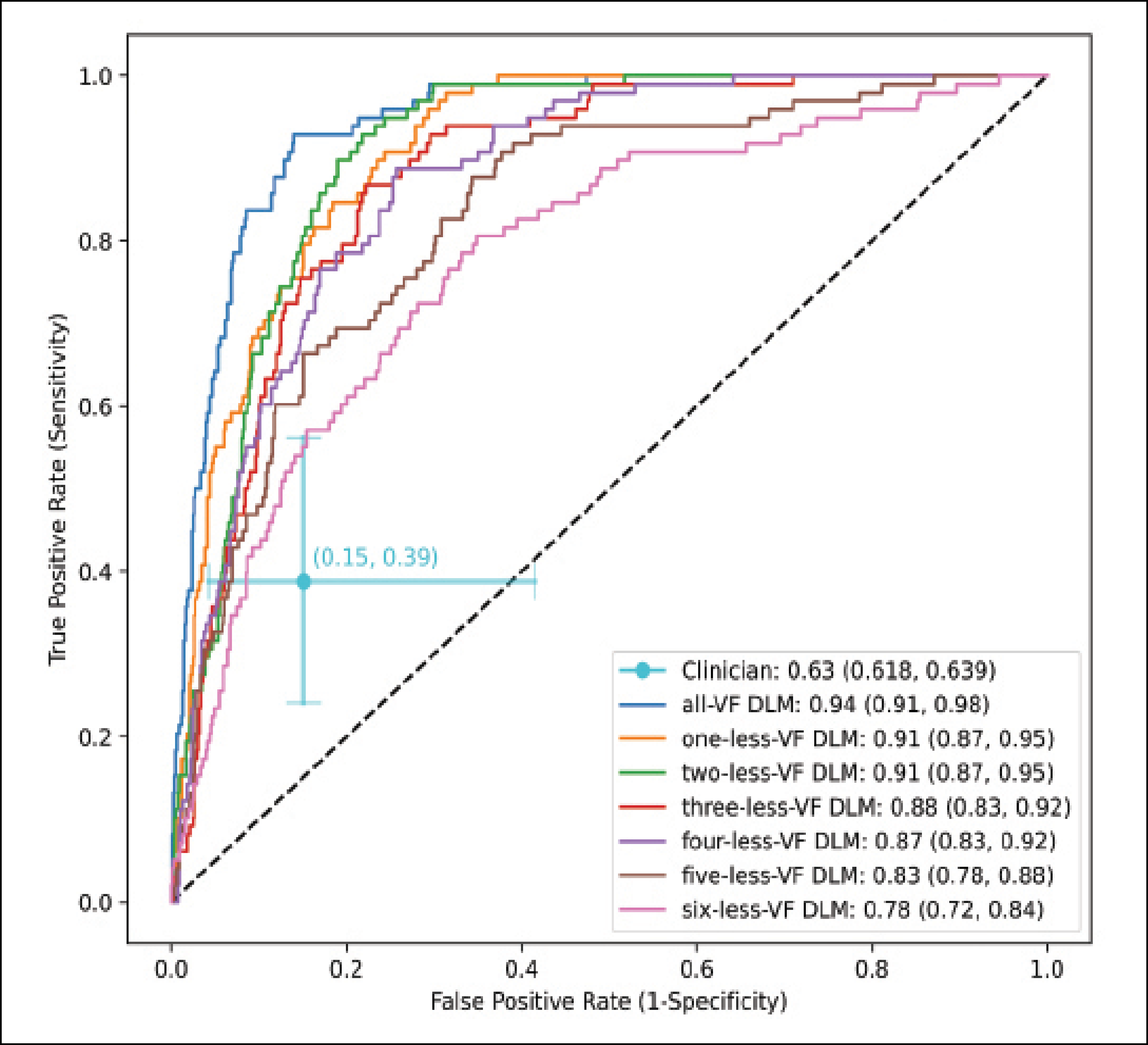
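The counts behind an UpSet plot can be sketched in a few lines: for each eye, record which methods flag it as worsening, then tally how many eyes fall into each exact combination. The method names and the toy data below are hypothetical placeholders and do not reproduce the study's methods or results.

```python
from collections import Counter
from typing import Dict, FrozenSet


def intersection_counts(flags_by_eye: Dict[str, Dict[str, bool]]) -> Counter:
    """Count eyes by the exact set of methods that flag them as worsening."""
    counts: Counter = Counter()
    for flags in flags_by_eye.values():
        combo: FrozenSet[str] = frozenset(m for m, flagged in flags.items() if flagged)
        counts[combo] += 1
    return counts


# Hypothetical flags from three illustrative methods (toy data).
eyes = {
    "eye_a": {"md_trend": True, "vfi_trend": True, "gpa_event": True},
    "eye_b": {"md_trend": True, "vfi_trend": False, "gpa_event": False},
    "eye_c": {"md_trend": False, "vfi_trend": False, "gpa_event": True},
    "eye_d": {"md_trend": False, "vfi_trend": False, "gpa_event": False},
    "eye_e": {"md_trend": True, "vfi_trend": False, "gpa_event": False},
}

counts = intersection_counts(eyes)
for combo, n in counts.most_common():
    print(sorted(combo) or ["(no method)"], n)
```

Poor agreement shows up as many eyes in single-method combinations and few in the combination containing every method.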

We asked whether we could create a flexible model that takes in the various definitions of visual field worsening and train it to use a consensus of methods to accurately detect worsening (Figure 2). We took 8,000 eyes with seven visual field tests conducted over time, and we gave the model both point-wise data (eg, the visual field threshold values at each point) and the global metrics from the visual field (eg, mean deviation and the reliability indices). We fed these into a sequence model that predicted the probability that the visual field was deteriorating. For each eye, a clinician also labeled any detected worsening on the last visual field test in the patient’s electronic health record. The clinician’s labels yielded an AUC of about 0.6, whereas the deep learning model achieved an AUC of more than 0.9. When we gave the model fewer visual field tests, it still performed very well; in general, its AUC remained greater than 0.8 (Figure 3).

Figure 2. A flexible model trained on a consensus of ways to detect visual field worsening.

Figure 3. An AI model designed to detect visual field worsening outperformed a clinician (AUC greater than 0.9 vs approximately 0.6). The AI model still performed well when given fewer visual field tests.
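The idea of a consensus label can be illustrated with a simple voting rule. The article does not specify how the consensus was formed, so the strict-majority rule below is an assumption made purely for illustration, and the function name, method names, and threshold are hypothetical.

```python
from typing import Dict


def consensus_label(flags: Dict[str, bool], min_fraction: float = 0.5) -> bool:
    """Label an eye as worsening when more than min_fraction of methods flag it.

    Assumption: a strict-majority vote; the source does not state the actual rule.
    """
    if not flags:
        raise ValueError("at least one method is required")
    votes = sum(1 for flagged in flags.values() if flagged)
    return votes / len(flags) > min_fraction


# Hypothetical flags from three illustrative methods.
print(consensus_label({"md_trend": True, "vfi_trend": True, "gpa_event": False}))
print(consensus_label({"md_trend": True, "vfi_trend": False, "gpa_event": False}))
```

Because the label is computed from whichever methods are supplied, the same training setup could in principle be rerun with a different set of definitions or a different threshold.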

USING OCT TO IDENTIFY FUNCTIONAL WORSENING

Visual field testing remains the gold standard for identifying glaucomatous progression in clinical trials and glaucoma clinics, but OCT imaging is a more efficient, patient-friendly, and often more reliable modality. If we could use OCT to identify eyes that are likely to undergo visual field changes, then we could reduce the number of visual field tests required.

My colleagues and I identified 4,211 eyes with at least five paired peripapillary OCT scans and visual field tests. We labeled eyes with visual field worsening using the consensus method previously described. We then developed an AI model that uses OCT data alone to predict which eyes will experience visual field worsening. The input for the model was serial OCT data, meaning a sequence of five or more OCT scans. Using a gated-transformer network architecture, we predicted the probability that a patient’s visual field would worsen over time. When visual field worsening was defined by the consensus method, the AUC was greater than 0.9; when it was defined by various individual methods, the AUC was still greater than 0.8. These models can therefore be trained with flexible definitions of visual field worsening.

INTEGRATING AI INTO CLINICAL PRACTICE

Although our data suggest a strong potential for these forecasting and detection models, several steps must be taken before AI can be incorporated into the clinical workflow. First, the models must be externally validated. This entails collaborating with various academic centers and private practices around the world to ensure that the results are generalizable and can be applied to patients across a wide range of practice settings and disease severities.

Once the models are externally validated, the next challenge is implementing them in practice. AI models require too many input parameters for a clinician to enter manually into a web-based tool, such as the Ocular Hypertension Treatment Study (OHTS) calculator. A more effective approach may be to incorporate AI models directly into our clinical workflows, enabling us to detect trends in visual field and OCT data over time. Then, an AI rapid progressor risk score or an AI visual field worsening index could be automatically displayed for the clinician when these data are processed.

PREDICTIONS

I expect AI clinical decision-making assistants to become more commonplace in glaucoma care over the next 5 to 10 years, and this has the potential to improve patient care and increase clinical efficiency. If patients who are at high risk of worsening or are already worsening can be identified earlier, more aggressive intervention can be initiated to reduce their risk of permanent vision loss. If AI glaucoma risk scores (both for forecasting future worsening and detecting ongoing worsening) are available to eye care providers, they can help identify which patients can be followed routinely with less frequent testing and which patients should be observed more frequently or referred to glaucoma specialists, resulting in a more efficient allocation of health care resources.

1. Chauhan BC, Malik R, Shuba LM, et al. Rates of glaucomatous visual field change in a large clinical population. Invest Ophthalmol Vis Sci. 2014;55(7):4135-4143.